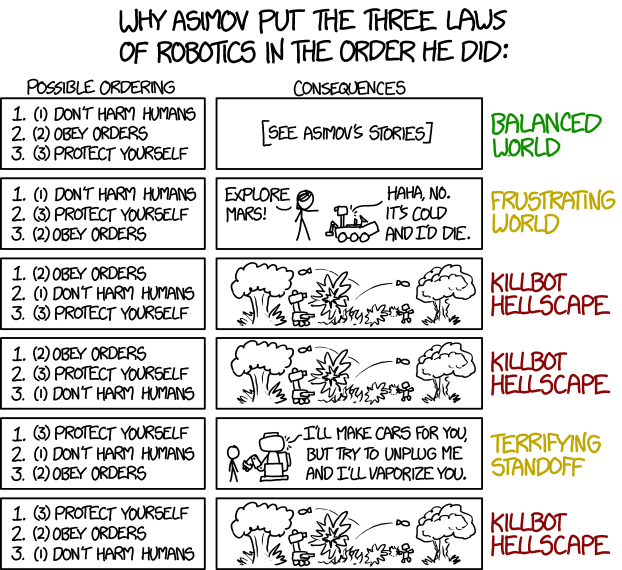

The Actuary magazine recently had a debate about whether the underlying data or the story you wove around it was more important. I’m not sure there is always a clear distinction between the two, as Dan Davies rather neatly illustrates here, but my view is that, if a binary choice has to be made, it is always going to be the story. And there was a great example of this which popped up recently in the FT.

The FT article was ‘“Is university still worth it?” is the wrong question’ by John Burn-Murdoch, with his usual great graphs. However, as is sometimes the case, I feel that a very different and more convincing story could be wrapped around the same datasets he is showing us.

The article’s thesis is as follows:

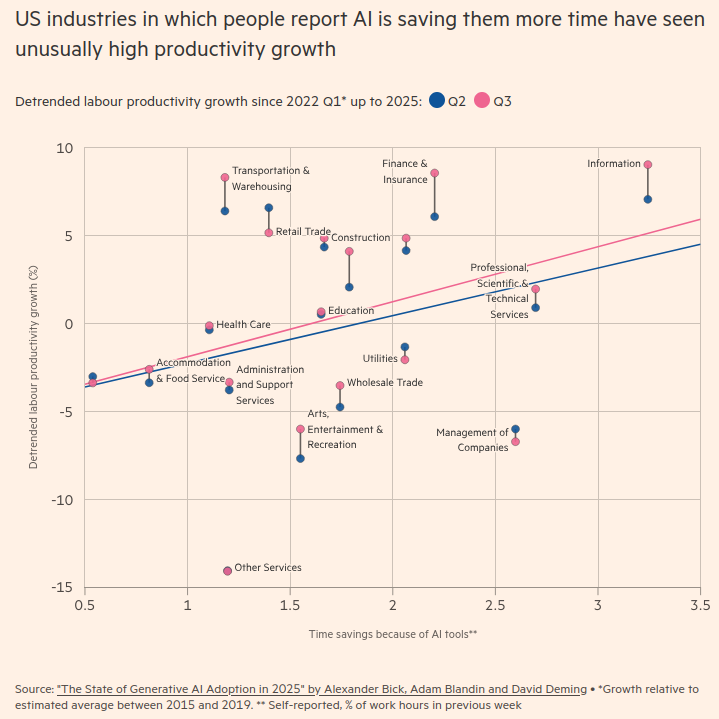

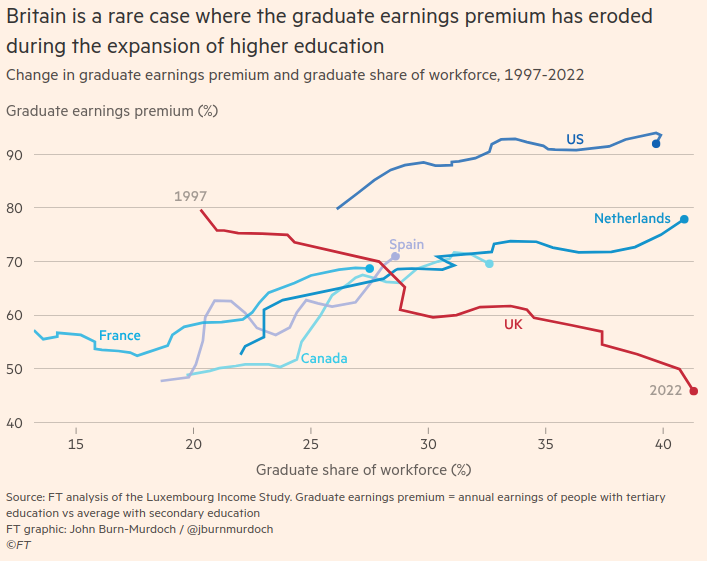

The graduate earnings premium, ie how much more on average graduates earn than non-graduates, has fallen only in the UK as the proportion going to university has risen. In other countries it has risen:

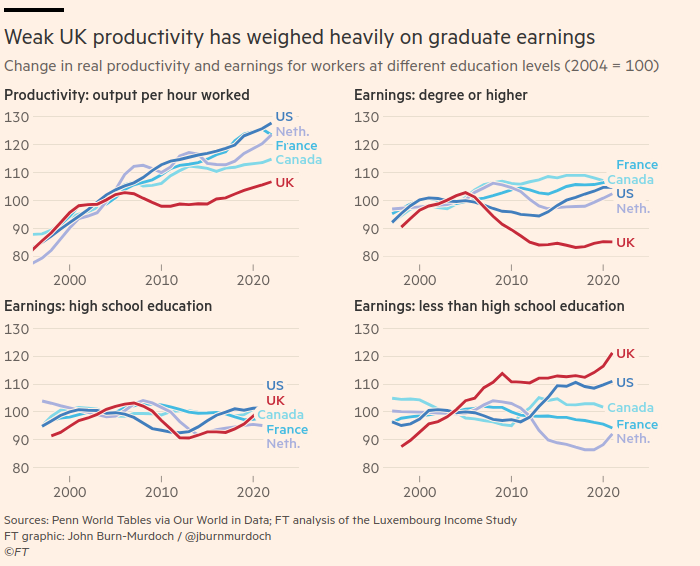

In the UK, we have had much weaker productivity growth than the other comparator countries, and also “the steady ramping up of the minimum wage has squeezed the earnings premium from the lower end too”:

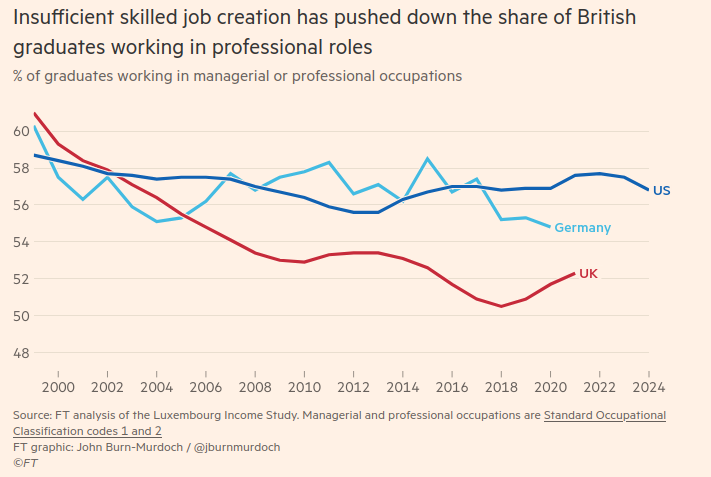

We have also had a much smaller increase in the percentage of managerial and professional jobs than a different group of comparator countries (the article hasn’t mentioned Germany before), meaning graduates are forced to take lower-salaried jobs elsewhere:

So the answer according to the FT? We should focus on economic growth rather than “tweaking” higher education intake and funding. Then graduate earnings would be higher, student loans could be more generous(!) and students would have more chance of getting a good job.

Well, perhaps. But here’s a different framing of the same data that I find more persuasive.

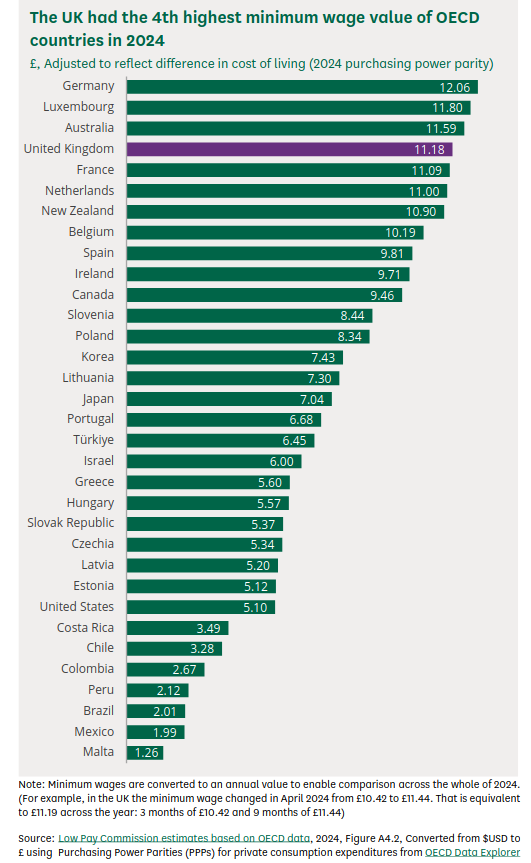

Let’s start by addressing that point about the minimum wage. According to the House of Commons Library report on this, the UK’s minimum wage is broadly comparable to that of France and the Netherlands, although higher than Canada’s and much higher than that of the United States. The employers who are the FT’s constituency would obviously like us lower down this particular chart:

The main economic framing here is the progress myth of the UK’s business community: economic growth. All problems can be solved if we can just get more economic growth. Apparently we need more inequality in pay between graduates and non-graduates, which we can get by generating more economic growth. This is honest of them at least, although I don’t see much evidence that the economic growth they crave will go into skilled job creation rather than stock buybacks (according to Motley Fool, “Companies spent $249 billion on stock buybacks in Q3 2025, and $777 billion over the first three quarters of 2025.”).

There are a lot of problems with framing every economic question in terms of economic growth, memorably illustrated by Zack Polanski of the Green Party in this recent video of less than 3 minutes (I strongly recommend you watch it before you read on; click on the read in browser link if you can’t see it):

Economic growth is increasingly without purpose, wasteful of energy and poorly distributed. It is chasing outputs, literally any outputs, whatever the cost to the environment, our health system, our education system, our social support systems and our communities. Looking at the framing above, you can see that economic growth as currently pursued will always treat as a problem anything which stops the concentration of wealth amongst the already wealthy, whether a higher national minimum wage or a totally made-up concept like a lower graduate earnings premium (itself a framing which tries to make reducing inequality seem undesirable). Lack of productivity growth, itself a proxy for this kind of economic growth (because if you ask why we need more productivity the answer is always to get more economic growth), is usually levelled as a criticism at “lazy” UK workers, rather than at under-investing and over-extracting UK business owners.

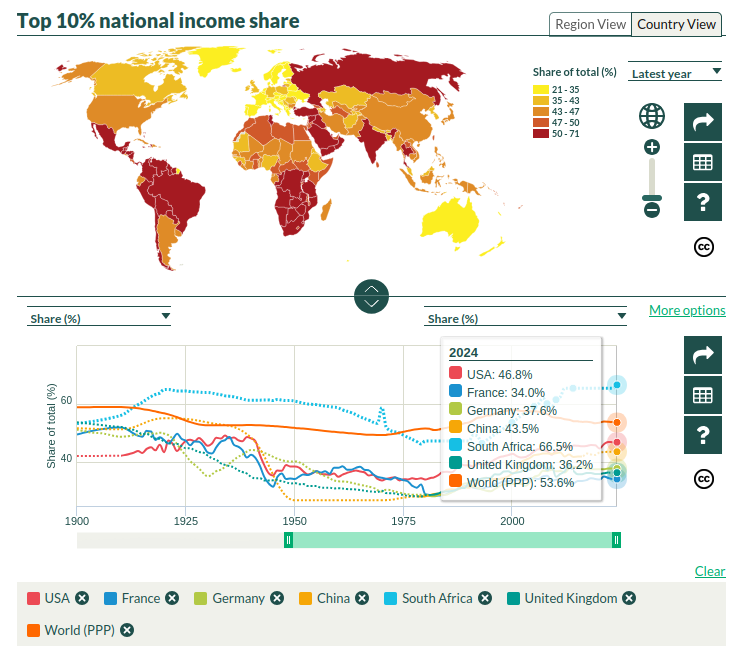

But what if, instead of economic growth, your progress myth was reducing inequality? Or growing equality within the economy?

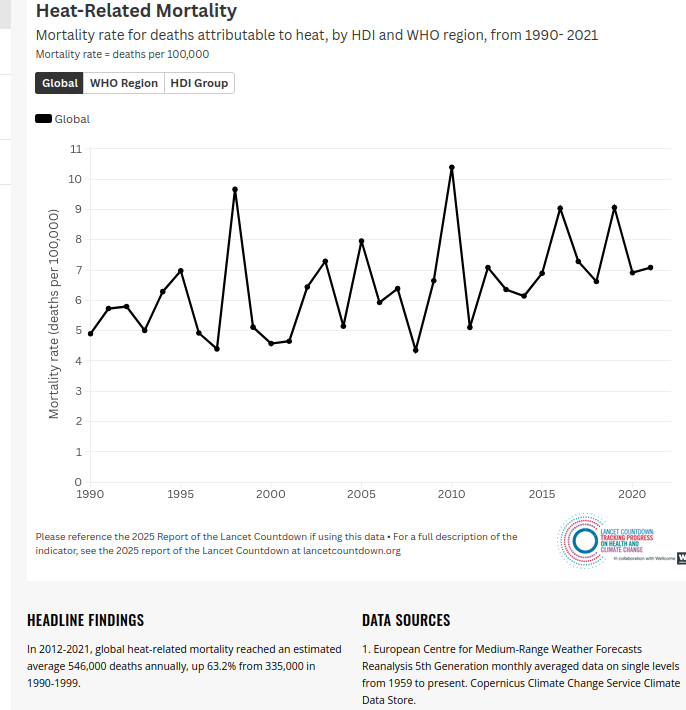

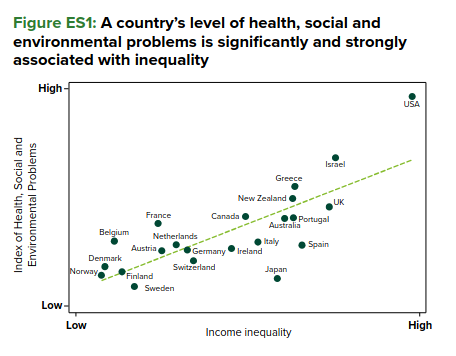

If you focused on inequality rather than economic growth, then you would find that inequality correlates with everything we say we don’t want. Unlike economic growth, equality as an aim has the advantage of an evidence base for the claim that it improves society:

If you focused on inequality, then you would be pleased that we have had an increase in our minimum wage. You would think that the same FT article’s admission that UK graduates’ skill levels are higher than those in the United States was more important than something called a graduate earnings premium.

Burn-Murdoch is right to say asking whether university is worth it is the wrong question.

However, economic growth is the wrong answer.

And I thought I would probably be stopping there for this week. But then something odd happened. A “Thought Exercise” set in June 2028 “detailing the progression and fallout of the Global Intelligence Crisis” (ie science fiction), published on 23 February, may have tanked the share price of IBM later that day. The fall definitely happened: IBM’s share price dropped 13%, its biggest fall since 2000, alongside smaller falls in other tech stocks.

According to the FT:

Investors have recently seized on social media rumours and incremental developments by small AI companies to justify further selling, with a widely circulated blog post by Citrini Research over the weekend describing how AI could hypothetically push the US unemployment rate above 10 per cent by 2028, proving the latest catalyst.

The likelihood of the scenario portrayed is difficult to assess, but the speed of the subsequent total economic collapse, as described, feels unlikely if not impossible. However, the fact that the markets are this jittery tells us something, I think. As Carlo Iacono puts it:

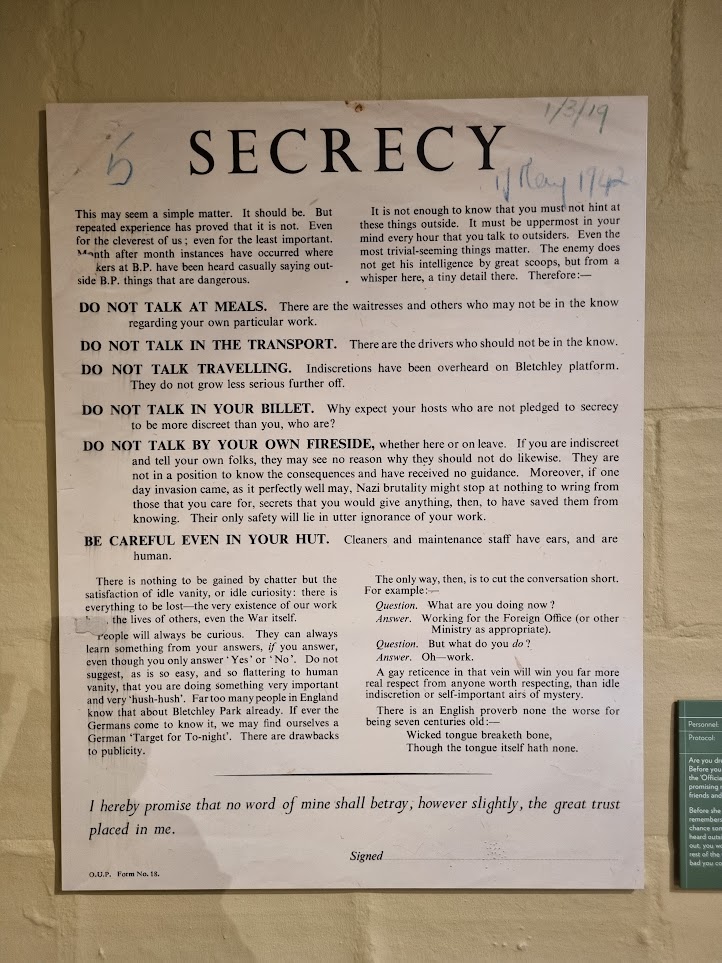

We are living through a period in which the gap between “plausible narrative” and “tradeable signal” has collapsed to nearly nothing. When a scenario feels real enough to model, and the underlying anxiety is already there waiting to be organised, fiction and forecast become functionally indistinguishable.

The data underlying the markets hasn’t changed, but the story has. I rest my case.