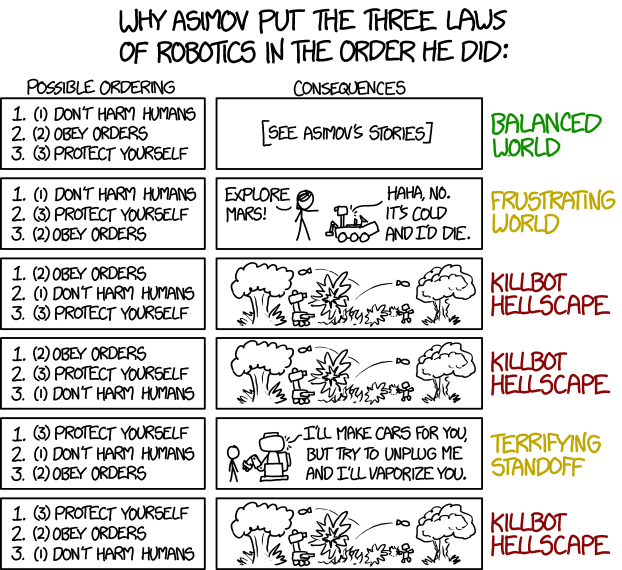

The “black box” was a constant refrain when I was working as an actuarial consultant. It described any situation where the results of a process were accepted without any understanding of how they were arrived at, something we felt that any self-respecting actuarial consultant should challenge in their own work and everybody else’s.

However, when you came to actually present analysis or arguments to a client, you expected a certain amount of that expertise to be taken as read, to effectively be inside a black box as far as the client was concerned. They couldn’t be expected to understand all of the aspects of what you were talking about, otherwise they wouldn’t need you. Good practice was always to put them in a position where they could understand and make decisions about the key aspects of your advice without needing to engage with the other parts. As the expert, you decided what was in the black box.

Now the black box is back with a vengeance for all the professionals who have relied upon one in their working lives. As Dan Davies puts it:

The same black-box property which stops you from being second guessed or overruled means that nobody is interested in your explanations for your decisions; it is definitional of being a black box that you are going to be judged by results.

And, if you are in the business of advising in the teeth of uncertainty, as actuaries are, then this is likely to be a real problem. If no one cares how your advice was constructed, and a client can get advice that ticks the compliance box they have to complete more cheaply and quickly than you can provide it, the more automated black box is going to win the business. The experienced professional still has a role in managing this process, verifying the results coming out of the black box and determining what can still be kept out of the black box, but he may be increasingly struggling to justify the cost of his junior colleague.

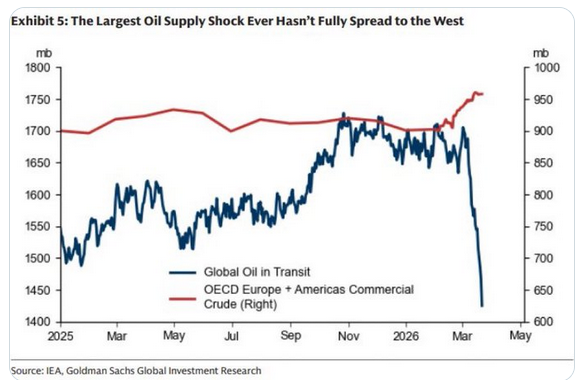

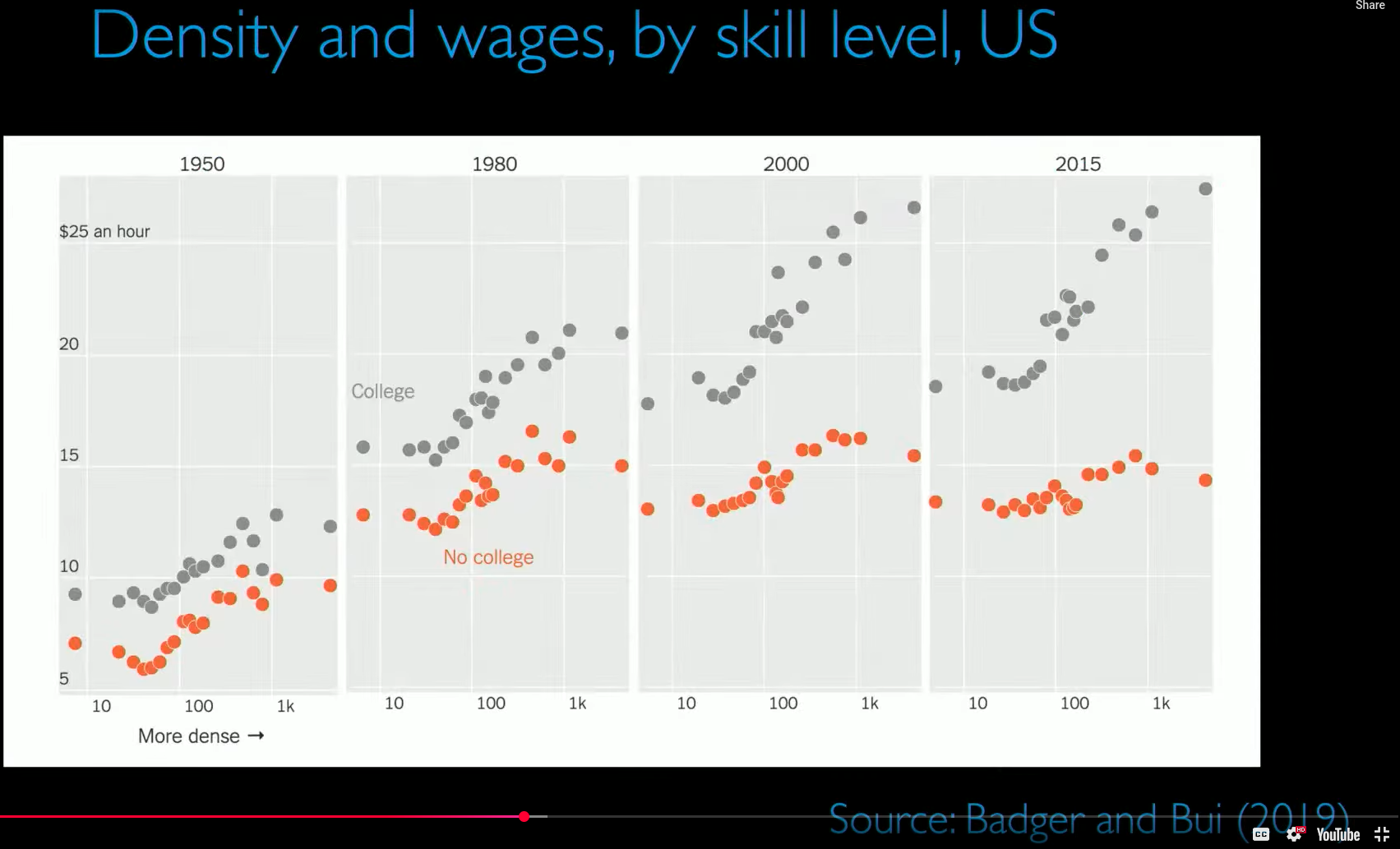

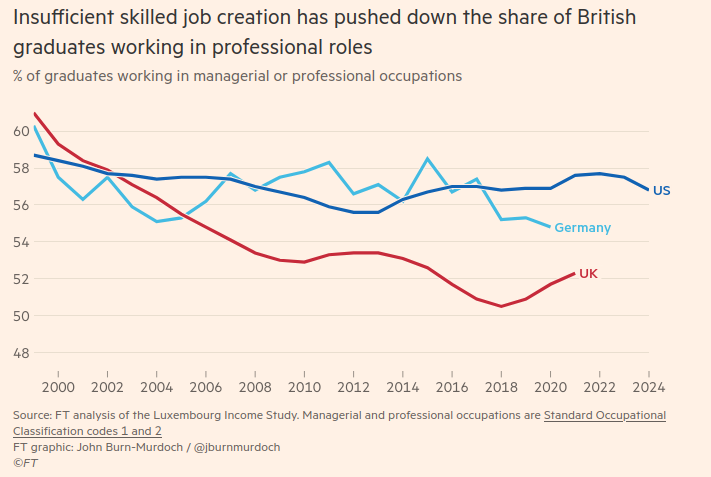

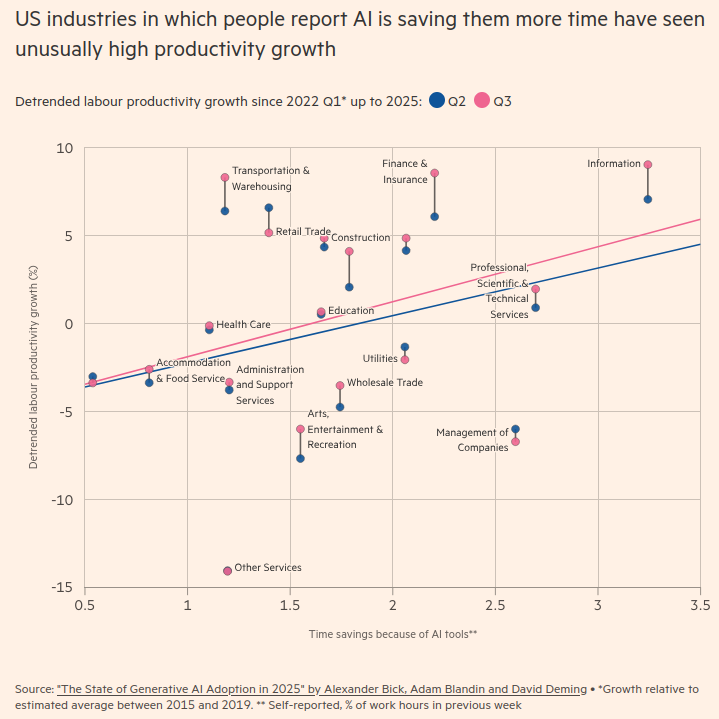

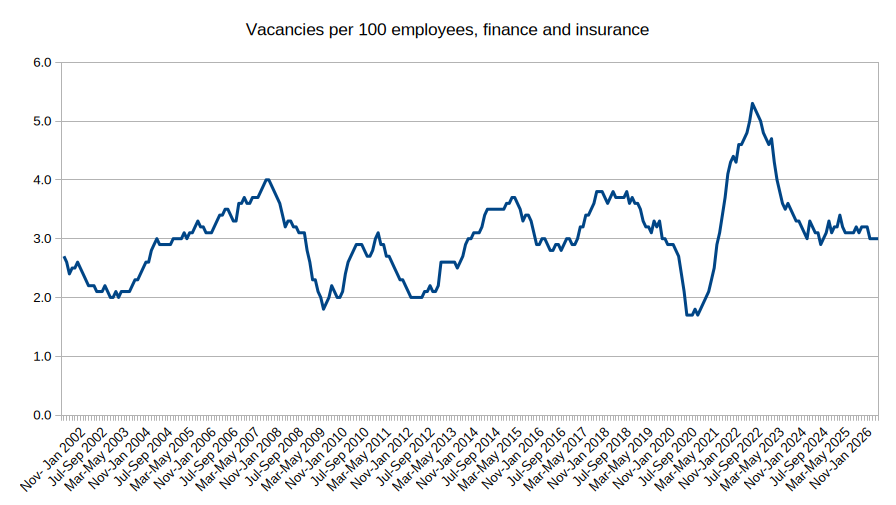

I wrote about how devastating the fall in graduate job listings was 9 months ago, so where have we got to since?

Well, things don’t look so bad in the UK right now according to the Office for National Statistics (ONS), reverting to close to the average after a post-pandemic surge in the finance and insurance sector:

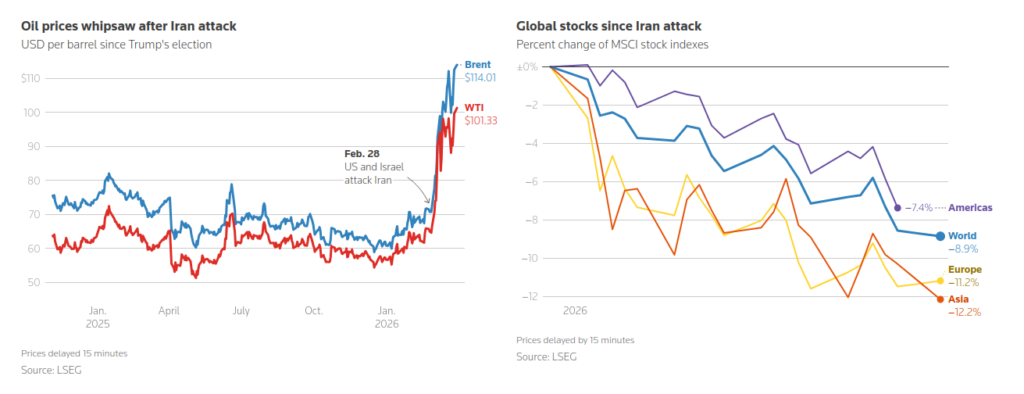

However, if we look at the United States, which tends to show us where the UK finance sector is going, it looks far more ominous:

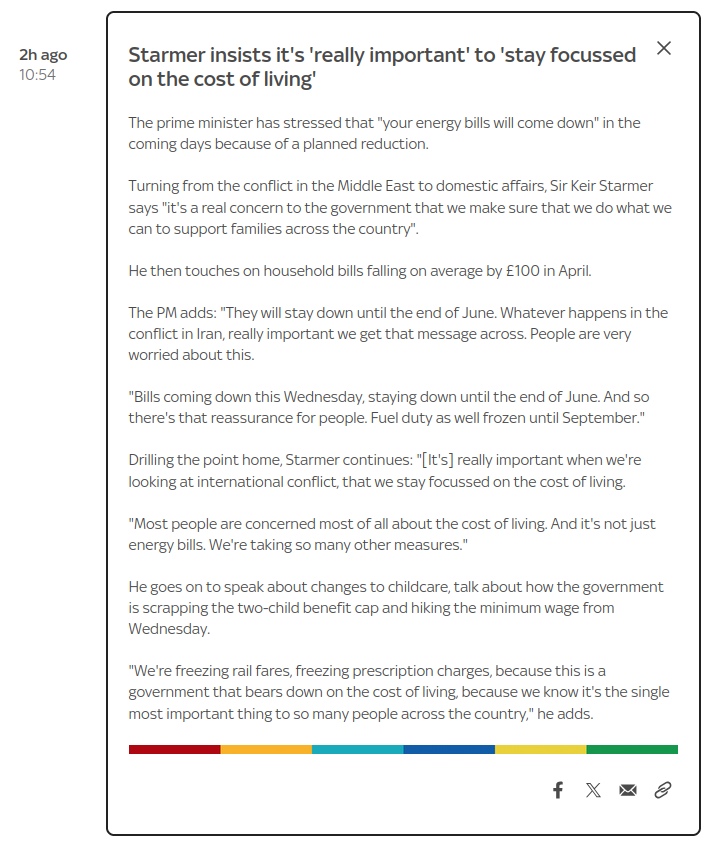

Yesterday Sky News ran a story about Standard Chartered’s CEO who, in his desperation not to describe over 7,500 job losses as cost cutting, said this:

It’s not cost-cutting. It’s replacing in some cases lower-value human capital with the financial capital and the investment capital we’re putting in.

We may need to sit with that statement for a little while.

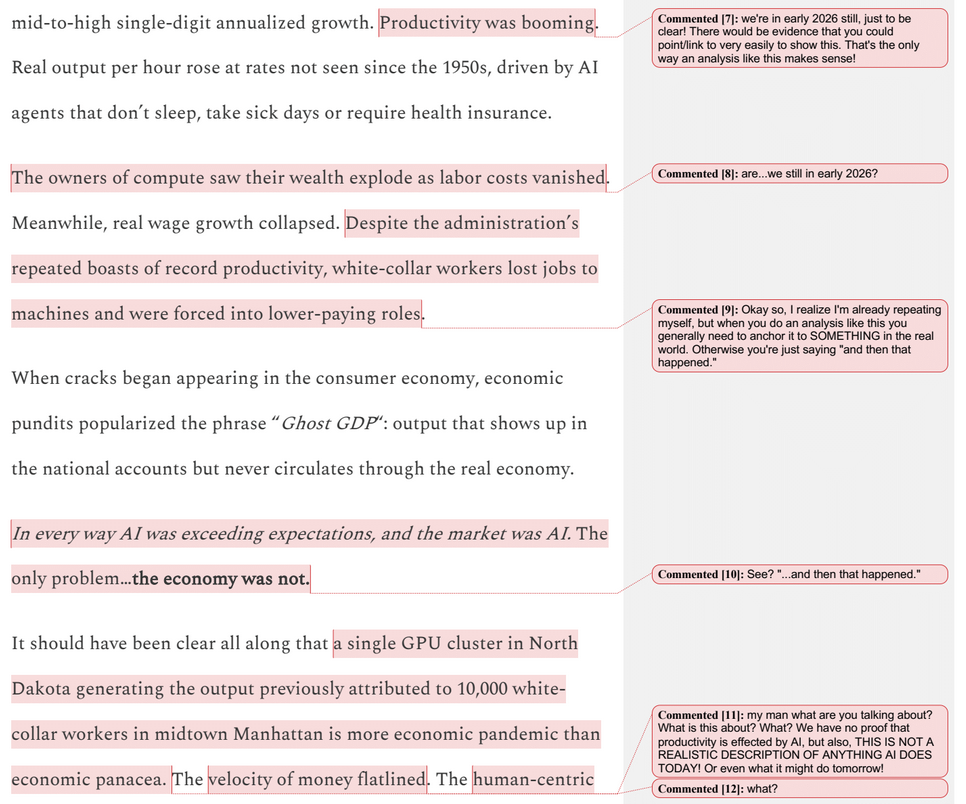

Daniel Susskind talks about this risk in his latest lecture, entitled A World Without Work. In summary, to paraphrase only slightly: sure, relatively junior white-collar roles may already be particularly hard hit by AI, but he is optimistic because of the impact on GDP, and we cannot pause because of China. He then goes on to talk about the four problems he sees for a post-AI future:

- Distribution (replacing wages);

- Contribution (how do you “pull your weight”);

- Power (domination by Big Tech on economics, politics, liberty, social justice and democracy); and

- Meaning (fulfilment in life).

Susskind has gone from thinking that the fear that AI is coming for your jobs was overblown, and that it was just task encroachment that we faced, to now thinking that it may encroach on all the tasks in most fields. The Jevons paradox (that technological innovation which increases the efficiency of a resource’s use leads to a rise in consumption of that resource) is no comfort if that new demand is robot-met.

Carlo Iacono suggests that the move of junior roles to AI may be subtle to begin with:

The weakness among young workers may appear as fewer people entering employment from outside the workforce. Firms may not fire large numbers of juniors; they may simply hire fewer of them.

That matters. The labour market can look healthy while the entry path narrows. Senior workers stay employed. Output rises. Productivity improves. There is no dramatic wave of redundancies.

Yet the first rung is being taken out.

It may also be masked by the fact that there remains a shortage of actuaries beyond the entry roles. There is almost a hint of desperation to approaches like this one, looking for introductions from a retired actuary like myself:

(followed by a list of clients he is working for)

And, even if you think the risk of the AI Bubble bursting soon, taking down the global stock markets underpinned by the Magnificent 7, is exaggerated, you do need to be suspicious about the current abilities of AI to replace junior staff. My experience with another, somewhat earlier, actuarial technology, the pensions valuation engine, would suggest that the outputs need to be analysed very carefully before sharing with a client. It often had dependencies between what should have been independent variables hidden in the programming, or vagaries in the setup which left out non-standard benefit rules for your particular scheme, for instance. Or the student who had set it up initially (usually a complicated process) might have made a mistake, or you might not have communicated with them very well to start with. Or a hundred other things.

For whatever reason, there was often still a lot to do after the valuation engine had produced some output.

Can this sort of thing happen with agentic AI? Well, think about that student programming the valuation engine, but on steroids. Its patchy capabilities combined with its basic psychopathy lead to some serious problems, as Hannah Fry entertainingly demonstrates here, arising from the agent’s relentless to-and-fro with the large language models it depends upon, asking them what it should do next. As Hannah says:

I built an AI agent. She opened a shop selling novelty mugs, emailed a journalist without being asked, and then leaked our passwords to a total stranger.

As Kyle Kingsbury wrote about having an AI agent as a colleague in a programming team:

Imagine a co-worker who generated reams of code with security hazards, forcing you to review every line with a fine-toothed comb. One who enthusiastically agreed with your suggestions, then did the exact opposite. A colleague who sabotaged your work, deleted your home directory, and then issued a detailed, polite apology for it. One who promised over and over again that they had delivered key objectives when they had, in fact, done nothing useful. An intern who cheerfully agreed to run the tests before committing, then kept committing failing garbage anyway. A senior engineer who quietly deleted the test suite, then happily reported that all tests passed.

You would fire these people, right?

Yet despite all this, the money continues to pour into the construction of AI infrastructure. There are already websites up and running for all of the parts of tasks AI cannot encroach upon.

The bottom rung of the actuarial ladder is clearly in danger. This is a particular problem for the actuarial profession, which has traditionally relied on longer periods of work-based training for its future qualified actuaries than many other professions. Training to become an actuary takes a long time. Median time to fellowship is still around six years, with some taking up to ten or giving up. The exams are hard to pass. There have been attempts by the profession to tackle some of these disincentives: the Chartered Actuary designation to make a destination of the generalist qualification before the specialisation of the fellowship, championed on this blog and launched in the teeth of opposition by some fellows, being one example.

It has led to a culture within actuarial firms around managing the extended time in training, with rituals around study leave and results days. One of the fears expressed in opposition to the introduction of the Chartered Actuary designation was that, if this could be achieved almost entirely within formal education at universities, the value of working alongside experienced actuaries would be lost.

It has led to a culture within the profession itself of managing large parts of its education system in house. Half of its revenue comes from, and around 30% of its expenditure goes on, “pre-qualification learning and development”. Sometimes it looks more like an education business with a professional side hustle.

But then the new AI toys have come along, and it turns out that many of those experienced actuaries may be less keen on graduates coming in and needing supervision from them after all. Many of them may rather spend hours on AI prompts than on developing another human being.

I fear that, increasingly, companies are not going to accommodate actuarial students in their work plans without significant persuasion. And, if the number of students studying while in work falls, the profession itself is going to struggle to finance its own bespoke education system at an acceptable cost to its members.

It will be hard for the profession to challenge this too: a market with no interest in developing the actuaries of the future will compete ever harder for the experienced ones, which is going to be good for many of those already established in their roles.

If the actuarial profession does accept the challenge of protecting the pipeline of future experienced actuaries, it will need to review its entire education syllabus through this lens. It will also need to engage with other partners involved in what is in effect a problem of capital formation and collective action: government incentives may be needed to encourage firms to continue to train early career professionals and discourage free-riding. There may be no way back for the student with no actuarial qualifications learning on the job. The universities may be needed to plug people in at a different career point, which will require them to innovate their way even further into the professional training role than ever before. As Carlo Iacono points out:

educational institutions may be pushed to simulate more of the apprenticeship environment. That does not mean adding a thin “AI literacy” module. It means creating settings where students practise judgement under uncertainty, in realistic workflows, with feedback that is close enough to hurt and useful enough to teach.

It will not be at all easy. But the alternative is a future without opportunity for those who do not already have it and an ageing profession withering on the vine it refused to nurture.