In 2017, I was rather excitedly reporting on ideas which were new to me at the time regarding how technology or, as Richard and Daniel Susskind referred to it in The Future of the Professions, “increasingly capable machines”, were going to affect professional work. I concluded that piece as follows:

The actuarial profession and the higher education sector therefore need each other. We need to develop actuaries of the future coming into your firms to have:

- great team working skills

- highly developed presentation skills, both in writing and in speech

- strong IT skills

- clarity about why they are there and the desire to use their skills to solve problems

All within a system which is possible to regulate in a meaningful way. Developing such people for the actuarial profession will need to be a priority in the next few years.

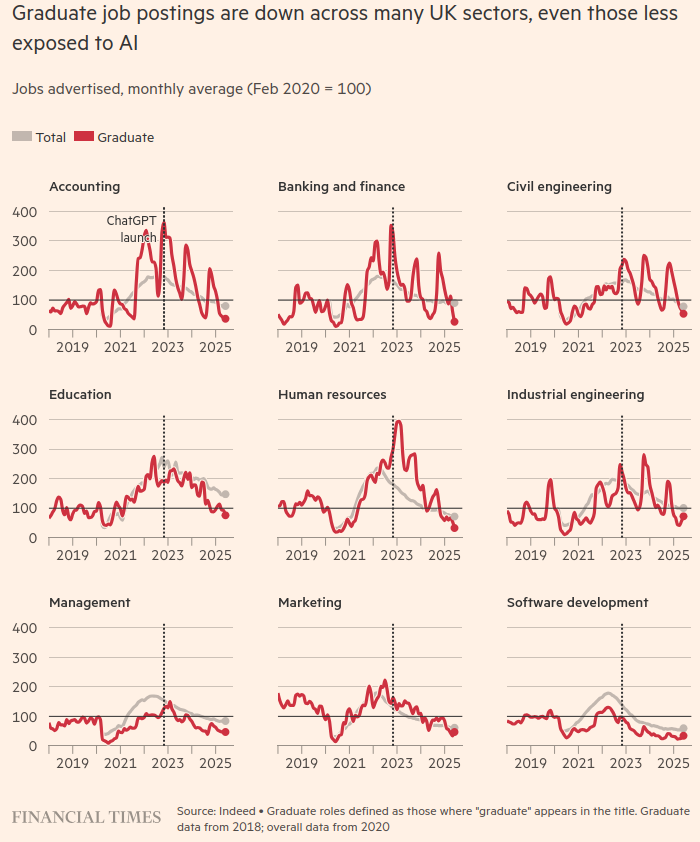

While all of those things are clearly still needed, it is becoming increasingly clear to me now that they will not be enough to secure a job as industry leaders double down.

And perhaps even worse than the threat of not getting a job immediately following graduation is the threat of becoming a reverse-centaur. As Cory Doctorow explains the term:

A centaur is a human being who is assisted by a machine that does some onerous task (like transcribing 40 hours of podcasts). A reverse-centaur is a machine that is assisted by a human being, who is expected to work at the machine’s pace.

We have known about reverse-centaurs since at least Charlie Chaplin’s Modern Times in 1936.

Think Amazon driver or worker in a fulfilment centre, sure, but now also think of highly competitive and well-paid but still ultimately human-in-the-loop roles, where you are responsible for AI systems designed to produce output in which errors are hard to spot and therefore hard to stop. In the latter role you are the human scapegoat: in the phrasing of Dan Davies, “an accountability sink”, or in that of Madeleine Clare Elish, a “moral crumple zone”, all rolled into one. This is not where you want to be as an early career professional.

So how to avoid this outcome? Well, obviously, if you have alternatives to roles where a reverse-centaur situation is unavoidable, you should take them. Questions to ask at interview to identify whether the role is irretrievably reverse-centauresque would be of the following sort:

- How big a team would I be working in? (This might not identify a reverse-centaur role on its own: you might be one of a bank of reverse-centaurs all working in parallel and identified “as a team” while in reality having little interaction with each other.)

- What would a typical day be in the role? This should smoke it out unless the smokescreen they put up obscures it. If you don’t understand the first answer, follow up to get specifics.

- Who would I report to? Get to meet them if possible. Establish whether they are a technical expert in the field you will be working in. If they aren’t, that means you are!

- Speak to someone who has previously held the role if possible. Although bear in mind that, if it is a true reverse-centaur role and their progress to an actual centaur role is contingent on you taking this one, they may not be completely forthcoming about all of the details.

If you have been successful in a highly competitive recruitment process, you may have a little bit of leverage before you sign the contract, so if there are aspects which you think still need clarifying, then that is the time to do so. If you recognise some reverse-centauresque elements from your questioning above, but you think the company may be amenable, then negotiate. Once you are in, you will understand a lot more about the nature of the role of course, but without threatening to leave (which is as damaging to you as an early career professional as it is to them) you may have limited negotiation options at that stage.

To do this successfully, self-knowledge will be key. It is that point from 2017:

- clarity about why they are there and the desire to use their skills to solve problems

To that word skills I would now add “capabilities” in the sense used in a wonderful essay on this subject by Carlo Iacono called Teach Judgement, Not Prompts.

You still need the skills. So, for example, if you are going into roles where AI systems are producing code, you need to have sufficiently good coding skills yourself to write a program to check the code written by the AI system. If the AI system is producing communications, your own communication skills need to go beyond producing work that communicates effectively to an audience. You need to reach the next level, where you understand what it is about your own communication that achieves that: what is necessary, what is unnecessary and what gets in the way of effective communication, ie all of the things that the AI system is likely to get wrong. Then you have a template against which to assess the output from an AI system, and for designing better prompts.

However, specific skills and tools come and go, so you need to develop something more durable alongside them. Carlo has set out four “capabilities” as follows:

- Epistemic rigour, which is being very disciplined about challenging what we actually know in any given situation. You need to be able to spot when AI output is over-confident given the evidence, or when a correlation is presented as causation. What my tutors used to refer to as “hand waving”.

- Synthesis is about integrating different perspectives into an overall understanding. Making connections between seemingly unrelated areas is something AI systems are generally less good at than analysis.

- Judgement is knowing what to do in a new situation, beyond obvious precedent. You get to develop judgement by making decisions under uncertainty, receiving feedback, and refining your internal models.

- Cognitive sovereignty is all about maintaining your independence of thought when considering AI-generated content. Knowing when to accept AI outputs and when not to.

All of these capabilities can be developed with reflective practice, getting feedback and refining your approach. As Carlo says:

These capabilities don’t just help someone work with AI. They make someone worth augmenting in the first place.

In other words, if you can demonstrate these capabilities, companies who themselves are dealing with huge uncertainty about how much value they are getting from their AI systems and what they can safely be used for will find you an attractive and reassuring hire. Then you will be the centaur, using the increasingly capable systems to improve your own and their productivity while remaining in overall control of the process, rather than a reverse-centaur for which none of that is true.

One sure sign that you are straying into reverse-centaur territory is when a disproportionate amount of your time is spent on pattern recognition (eg basing an email/piece of coding/valuation report on an earlier email/piece of coding/valuation report dealing with a similar problem). That approach was always predicated on being able to interact, at some peer review stage, with a more experienced human who understood what was involved in the task. But it falls apart when there is no human to discuss the earlier piece of work with, because the human no longer works there, or because a human didn’t produce the earlier piece of work. The “fake it until you make it” approach is not going to work in environments like these, where you are more likely to fake it until you break it. And pattern recognition is something an AI system will always be able to do much better and faster than you.

Instead, question everything using the capabilities you have developed. If you are going to be put into potentially compromising situations in terms of the responsibilities you are implicitly taking on, the decisions needing to be made and the limitations of the available knowledge and assumptions on which those decisions will need to be based, then this needs to be made explicit, to yourself and the people you are working with. Clarity will help the company which is trying to use these new tools in a responsible way as much as it helps you. Learning is going to be happening for them as much as it is for you here in this new landscape.

And if the company doesn’t want to have these discussions or allow you to hamper the “efficiency” of their processes by trying to regulate them effectively? Then you should leave as soon as you possibly can professionally and certainly before you become their moral crumple zone. No job is worth the loss of your professional reputation at the start of your career – these are the risks companies used to protect their senior people of the future from, and companies that are not doing this are clearly not thinking about the future at all. Which is likely to mean that they won’t have one.

To return to Cory Doctorow:

Science fiction’s superpower isn’t thinking up new technologies – it’s thinking up new social arrangements for technology. What the gadget does is nowhere near as important as who the gadget does it for and who it does it to.

You are going to have to be the generation who works these things out first for these new AI tools. And you will be reshaping the industrial landscape for future generations by doing so.

And the job of the university and further education sectors will increasingly be to equip you with both the skills and the capabilities to manage this process, whatever your course title.