I didn’t understand statistics until I started taking actuarial exams that required me to master particular statistical techniques, decide which ones were appropriate to the problem I was looking at, apply them accordingly and interpret what the results did and didn’t tell me. An A at GCE O level in maths, As at GCE A level in both maths and further maths, and a maths degree from Oxford did not give me those abilities.

I didn’t understand economics until I started putting together modules which could be taught on both BSc and MSc courses and then teaching them. My economics module on the way to qualifying as an actuary, based very heavily on Economics by Begg, Fischer and Dornbusch, did not give me that understanding.

There is a pattern here: the most impactful experiences we have are frequently at some distance from the credentials we present to the world. Your education is not a paragraph on your CV; it is your lived experience, sometimes assisted by, sometimes actively hindered by and often pursued completely independently of the educational institutions you have had a relationship with during your life.

In the last lecture of his Gresham College series on The Future of Work, called Education – And Its Limits, Daniel Susskind marched into this often fraught relationship between credentials and actual actionable skills, knowledge and experience. His analysis is a very clear expression of the problems that will be created if AI systems prove to be even half as capable and long-lasting as people from OpenAI and Anthropic are telling us they will be.

Susskind argues that trying to future-proof students through education is a hopeless task and that working on the assumption of unresolvable uncertainty is the better way forward. He suggests that a “no regret” strategy for education would focus on two things: the basics, which he describes as literacy, numeracy and critical thinking, and the critical use of AI. Members of the audience suggested some other “basics”, communication skills for instance.

The other strand of Susskind’s strategy was the critical use of AI, and his challenge to the audience was whether we can teach AI without losing the basics in the process.

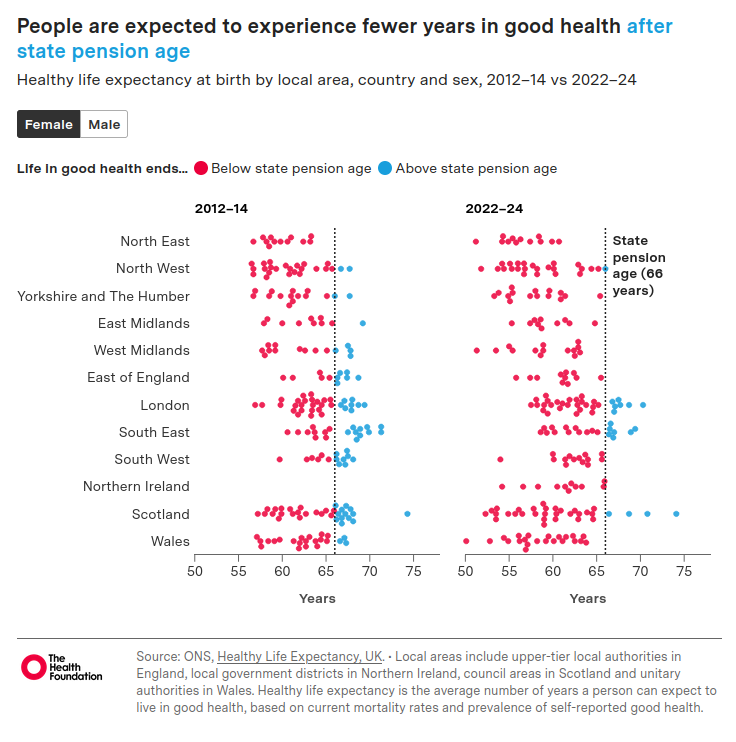

After a section on the need to make continuing education in later life more accessible, he returned to his list, from a previous lecture (which I briefly touched on here), of problems for a post-AI future:

1. Distribution (replacing wages);

2. Contribution (how do you “pull your weight”);

3. Power (domination by Big Tech on economics, politics, liberty, social justice and democracy); and

4. Meaning (fulfilment in life).

This is why I continue to watch Susskind’s output: he is an unusual combination of an extremely orthodox economist (see his book Growth: A Reckoning for proof of this) and someone who has been wrestling for over 10 years with the challenges that more capable systems pose to our way of doing things. What Susskind gives you is a peek at how the actual economists advising our governments would deal with things if OpenAI and Anthropic are proved right. It is as if you had someone who both thought, as Ben Bernanke, Chair of the Federal Reserve, did in October 2007, that “the banking system is healthy” and also believed that the banking collapse was going to happen anyway.

And what he reveals is that, if Anthropic are right, orthodox economists have really got no policy prescriptions worthy of the name.

On 1. Distribution: Susskind acknowledges that, if the labour market could no longer distribute wealth effectively, then a bigger state would be needed to do so (though he was at pains to emphasise that this would not be the 20th-century central planning type of state).

On 2. Contribution: Susskind thinks that perhaps we should allow people to make non-economic contributions! As if all the activity in society which economists routinely ignore were not already happening!

On 3. Power: Susskind says the political power of Big Tech with regard to liberty, social justice and democracy is a problem. We have, he says, anti-trust legislation that can deal with Big Tech’s economic power, but not with its political power. I don’t know what kind of political power he thinks Big Tech would have without the economic power that has been granted to it by a steady erosion of that anti-trust legislation over recent years.

On 4. Meaning: he has little to say, other than observing that we currently have policies for work but not for leisure.

And underlying all of this is the implicit assumption of a stable future environment for the technology to operate within.

For me it brought to mind something said by a friend of mine who had been in a tent with Cory Doctorow at the How The Light Gets In festival at Hay-on-Wye last week: “He has a theory of change.”

He really does, including one for breaking the economic power of the Big Tech companies. You all need to read Enshittification for the full account, but my review of the book here offers a sneak peek.

Susskind really, really does not have a theory of change, which tells me that the economics profession does not have one either.

However, I continue to watch him because he goads me into thinking about what some better answers to his questions might be, and perhaps also about a better question than whether we can teach AI without losing the basics in the process. I think that better question is: what do we need to learn when the future is uncertain?

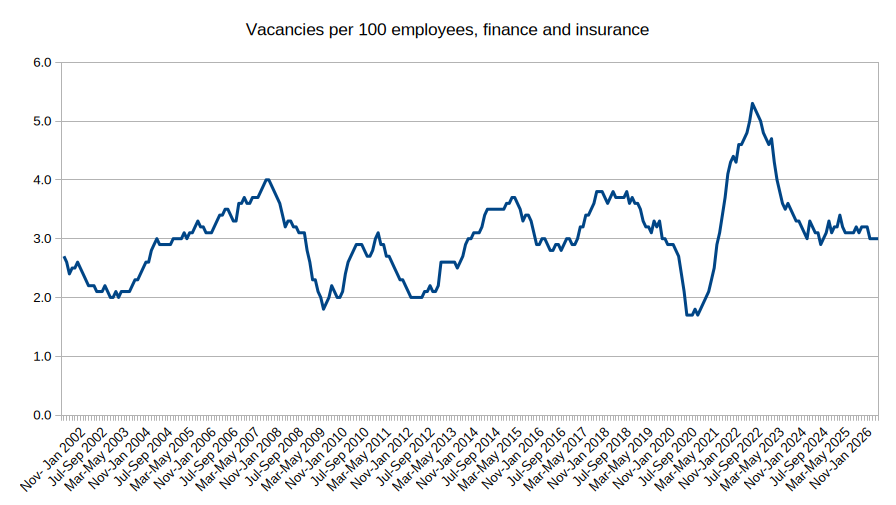

First of all, let’s remind ourselves of the problem. If no one cares how your advice was constructed, and your client can get advice that ticks the compliance box more cheaply and quickly from an AI system, then, while the experienced professional still has some role in managing the process, it may become increasingly hard to justify the cost of the junior colleague. So the future education system is going to need to help that future junior colleague demonstrate their value in ways they haven’t historically needed to. AI has not brought new problems; it has accelerated existing ones. And the gap between actual actionable skills, knowledge and experience and the credentials which are supposed to represent them is currently the key one as far as that future junior colleague is concerned.

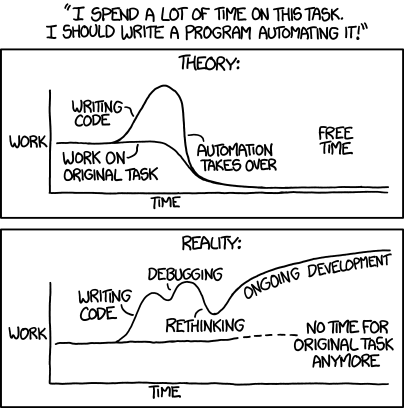

The temptation is to rush into syllabus changes towards whatever currently looks like cutting-edge activity. I agree with Susskind that this would be a mistake. He cites the example of Michael Gove, amongst many other education ministers at the time, mandating the teaching of coding in 2014. Now we find that the new AI systems (despite the problems highlighted by Hannah Fry, Kyle Kingsbury and others I talked about here) are best suited to writing code, and Anthropic claim that Claude is now writing 80% of its own code. Programmers are saying that, in terms of the famous XKCD cartoon above, they are now living on the theory curve.

But higher education institutions, and those professions that still want to be in the game of developing the next generation of professionals, can do a lot more both to reduce the gap between credentials and actual actionable skills, knowledge and experience, and to make it clearer to employers that they have done so. That will be the subject of my next post.